
Semaphor storage limit9/19/2023  Thanks for your consideration.Įvent-driven architecture for semaphores and mutexes requires the lock feature to be implemented in transaction scope (currently they are supported only on a connection level). If you need more details, I would be happy to share relevant logfiles. You should see that they will get "stuck" behind the LongRunningTask jobs and even when there are worker threads available, they will delay for several minutes before they are picked up. Find and view the job details for any FastTask job. Then manually trigger the 'QueueFastTask` scheduled job once or twice. You may choose to do this more than once to get the queue bigger. To reproduce, trigger the 'QueueLongRunningTask' and allow it to move the tasks to scheduled. " ) ] public static void FastTask ( int x ) => Thread. _highAvailabilityServer = new BackgroundJobServer ( new BackgroundJobServerOptions Here is a quick repro case, starting with the package versions that were used in the test below. Changing this from a polling mechanism to an event-driven mechanism would probably increase throttled job throughput, while still enforcing the limitations I don't know if this is even possible, but I'd be willing to attempt it if you might consider a pull request? Then whenever a job with a throttle completes, a filter could pull up the next job from the 'Blocked' state and put in 'Enqueued'. I was curious if you have ever attempted to put throttled jobs in their own state 'Blocked' or 'Throttled' where they wouldn't clog up the 'Scheduled' state. I'd really like to see throttling work in a performant manner without carving this out into its own queue. Because of that pain, we purchased the 'Pro' version, moved everything to a single wider queue, and use throttling to govern our jobs. 
The most trivial option is to move it to its own queue, but previously I had found challenges having so many different queues, with different worker counts, all running their own BackgroundJobServers and the connections they require. This is exceptionally slow with Sql Server storage, and still painful with the speed Redis storage offers.

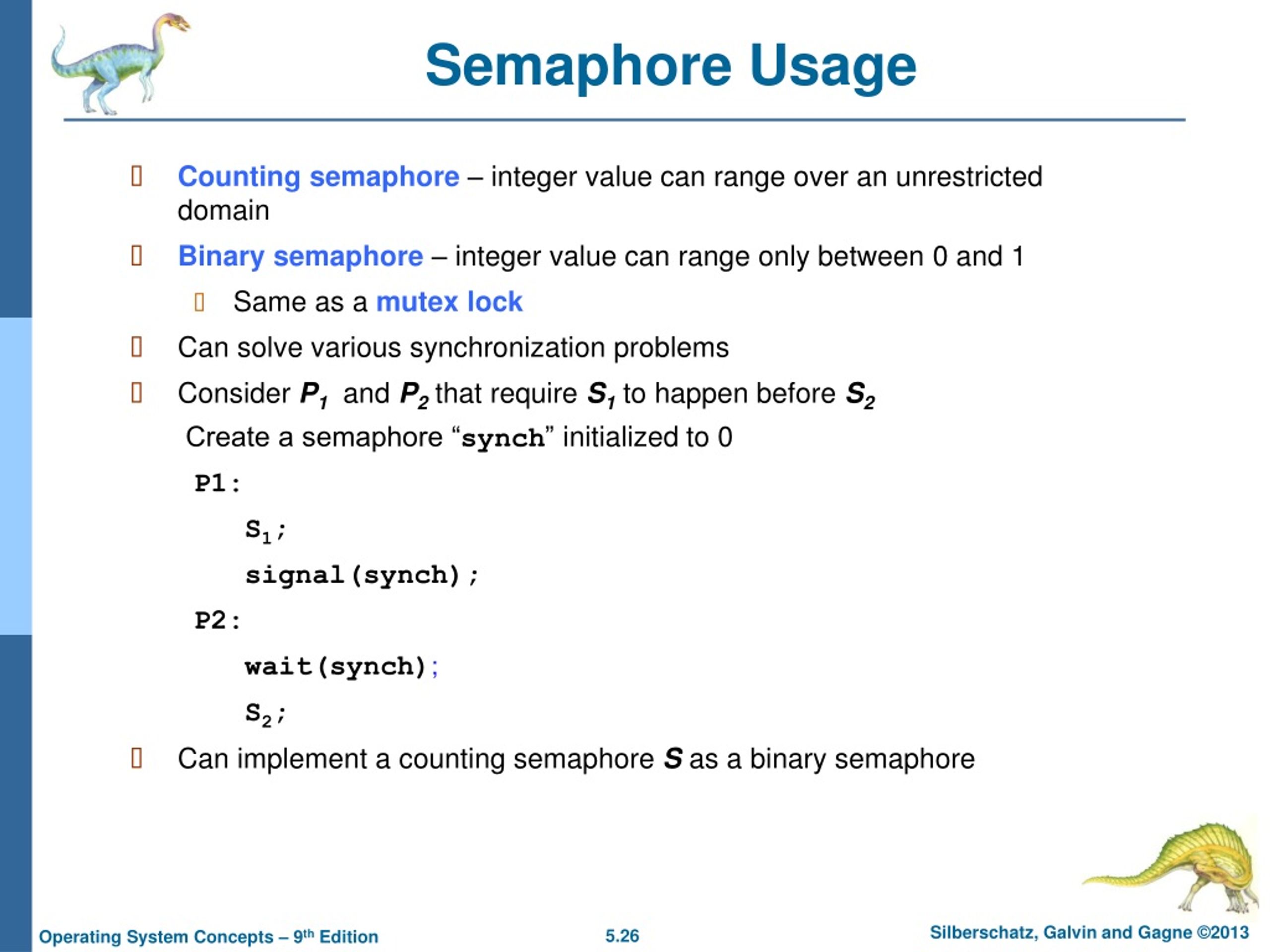

Every polling interval, the jobs go from Scheduled -> Enqueued -> Limited by the Semaphore -> Scheduled, and the time it takes to make all these changes causes the smaller, non-throttled jobs to get backed up.

What I've found though, is when the jobs are limited by the semaphore, they get put back into the "Scheduled" bucket for a retry. But now I have a new use-case where I'd like to schedule 100,000 jobs, and have them limited to 10 running concurrently, and just work them throughout the day. I use throttling to limit jobs by type, so one task does not preempt other waiting tasks. Generally, all the tasks in this queue run fairly fast so I throw everything in there. I have a queue, which currently runs 20 threads on 2 servers, for a max of 40 concurrent jobs. I have found a challenging use-case when using Throttled jobs, and I fear it may be by design.
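The cost of the Scheduled -> Enqueued -> Limited -> Scheduled cycle described above can be estimated with a toy model. This is a Python simulation, not Hangfire code. `simulate_polling` is a hypothetical helper, and the model makes two simplifying assumptions. First, every over-limit job is rescheduled and becomes due again on the very next polling pass. Second, jobs admitted by the semaphore finish within that same pass.

```python
def simulate_polling(total_jobs: int, limit: int, passes: int) -> tuple[int, int]:
    """Toy model of semaphore throttling that retries via the Scheduled state.

    Returns (completed_jobs, state_transitions) after `passes` polling passes.
    Assumptions (hypothetical, not Hangfire's exact behavior):
      * every waiting job becomes due again on the next polling pass;
      * jobs admitted by the semaphore finish within the same pass.
    """
    scheduled = total_jobs  # throttled jobs sitting in the Scheduled set
    completed = 0
    transitions = 0
    for _ in range(passes):
        if scheduled == 0:
            break
        # The scheduler moves every due job to Enqueued...
        enqueued = scheduled
        transitions += enqueued        # Scheduled -> Enqueued
        # ...but the semaphore admits at most `limit` of them.
        admitted = min(limit, enqueued)
        completed += admitted
        transitions += admitted        # Enqueued -> Succeeded (counted as one change)
        # Every other job is rejected and sent back to wait for the next pass.
        rejected = enqueued - admitted
        transitions += 2 * rejected    # Enqueued -> limited -> Scheduled
        scheduled = rejected
    return completed, transitions


if __name__ == "__main__":
    # 100 jobs, at most 10 at a time, 10 polling passes.
    print(simulate_polling(100, 10, 10))  # (100, 1550)
```

In this model, every waiting job bounces through the Scheduled and Enqueued states once per pass. As a result, the total number of state changes grows roughly quadratically with the size of the backlog, even though only `limit` jobs make progress on each pass.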
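The proposed 'Blocked' state can be sketched as an event-driven throttle. Over-limit jobs wait in a FIFO list and move to Enqueued only when a running job completes and releases its slot, so nothing is re-polled. `BlockedStateThrottle` and its methods are hypothetical names. This models the idea in plain Python and does not use Hangfire's actual filter or storage APIs.

```python
from collections import deque
from typing import Deque, Optional, Set


class BlockedStateThrottle:
    """Hypothetical event-driven throttle: over-limit jobs wait in a 'Blocked' state."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.running: Set[str] = set()      # jobs holding a slot (Enqueued or Processing)
        self.blocked: Deque[str] = deque()  # FIFO, so jobs are released in submission order
        self.transitions = 0                # total state changes performed

    def submit(self, job_id: str) -> str:
        """Enqueue the job if a slot is free, otherwise park it. Returns its new state."""
        self.transitions += 1
        if len(self.running) < self.limit:
            self.running.add(job_id)
            return "Enqueued"
        self.blocked.append(job_id)
        return "Blocked"

    def complete(self, job_id: str) -> Optional[str]:
        """Completion hook: free the slot and promote the oldest blocked job.

        Returns the id of the promoted job, or None if nothing was waiting.
        """
        self.running.remove(job_id)
        self.transitions += 1  # Processing -> Succeeded
        if not self.blocked:
            return None
        next_id = self.blocked.popleft()
        self.running.add(next_id)
        self.transitions += 1  # Blocked -> Enqueued
        return next_id


if __name__ == "__main__":
    throttle = BlockedStateThrottle(limit=2)
    print([throttle.submit(j) for j in ["a", "b", "c", "d"]])
    # ['Enqueued', 'Enqueued', 'Blocked', 'Blocked']
    print(throttle.complete("a"))  # c
```

In this model, each job changes state at most three times: it is submitted, possibly promoted out of Blocked, and completed. This holds no matter how long the job waits. A real implementation would also have to release the slot and promote the next job atomically across servers. This single-threaded toy sidesteps that requirement.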
